Technical foundations of an agentic system

Never heard of Agentic AI? Check our previous post, What to expect from Agentic AI?, to grasp some of the core ideas behind it, or let us show you our concept of Agentic AI processing for a bottling line: Concept for controlling a bottling line with Agentic AI.

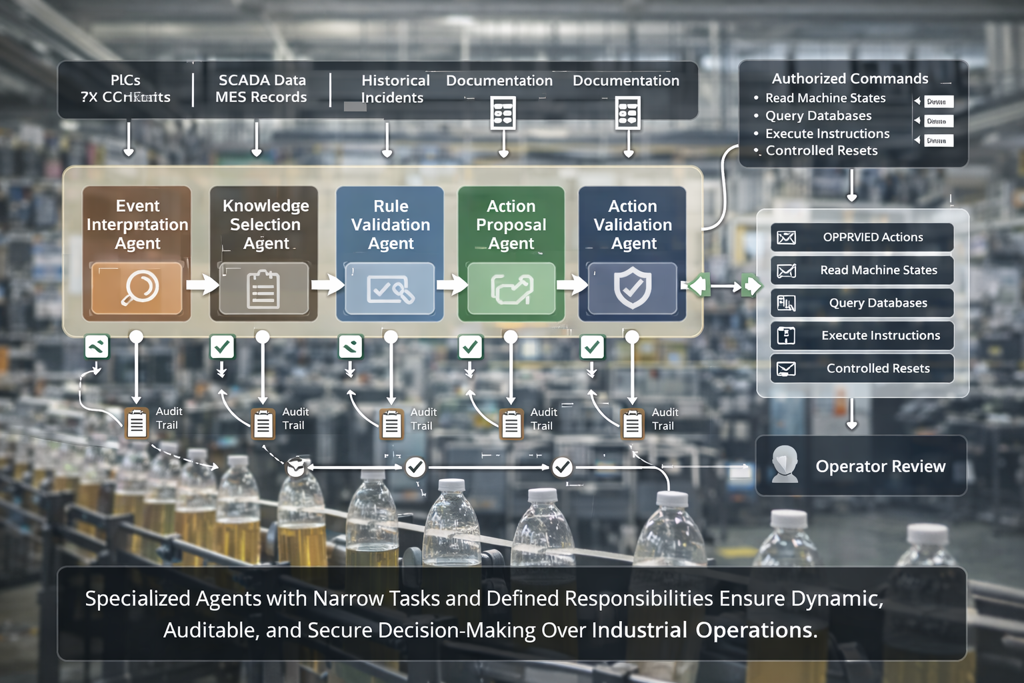

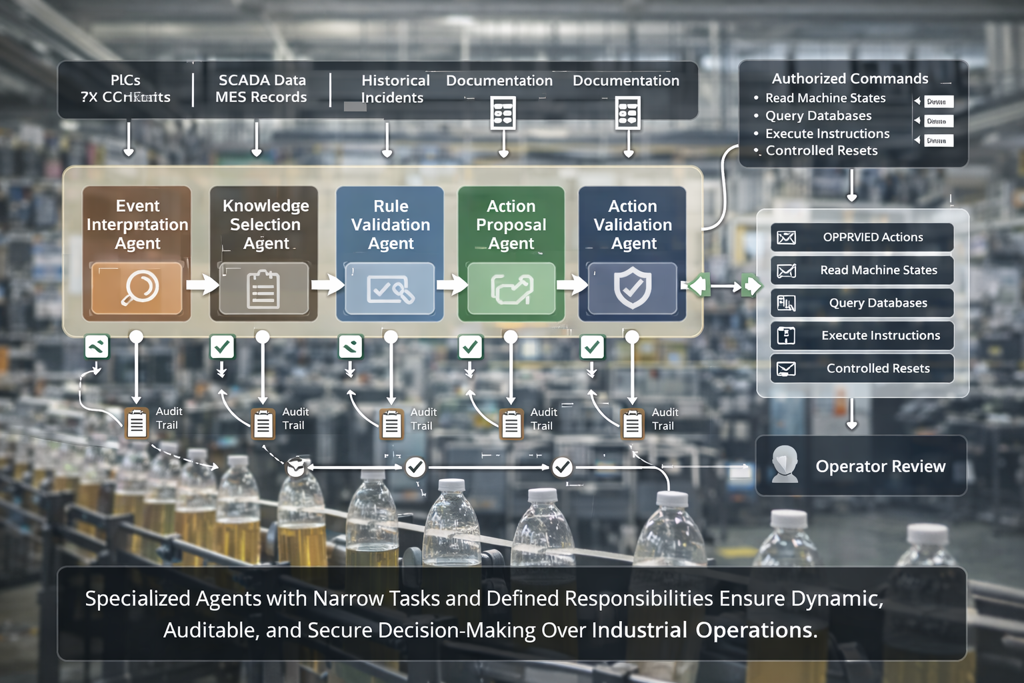

An agentic system in an industrial setting operates as a coordinated set of specialised agents rather than a single model acting alone. It begins by gathering context from PLCs, SCADA data, MES records, historical incidents, and internal documentation. This information forms a continuously updated operational picture that allows the system to recognise deviations, correlate them with known patterns, and determine whether a new event requires action. Knowledge is not static; it is assembled dynamically from the plant’s own data and technical sources.
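To make the idea of a continuously updated operational picture more concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not our production implementation: the class `OperationalPicture`, the tag names, the expected ranges, and the pattern `filler_valve_stuck` are all hypothetical, and real PLC, SCADA, or MES connectors are replaced by plain dictionaries.

```python
from dataclasses import dataclass, field


@dataclass
class OperationalPicture:
    """Hypothetical in-memory view of the latest plant readings."""
    readings: dict = field(default_factory=dict)        # "source.tag" -> latest value
    known_patterns: dict = field(default_factory=dict)  # pattern name -> set of tags

    def update(self, source, values):
        # Merge new values from one source (e.g. "plc", "scada") into the picture.
        for tag, value in values.items():
            self.readings[f"{source}.{tag}"] = value

    def deviations(self, expected):
        # Return the tags whose current value lies outside its (low, high) range.
        return {
            tag
            for tag, (low, high) in expected.items()
            if tag in self.readings and not low <= self.readings[tag] <= high
        }

    def match_patterns(self, deviating):
        # A known pattern matches when all of its tags are currently deviating.
        return sorted(
            name for name, tags in self.known_patterns.items() if tags <= deviating
        )


# Assumed example data; the numbers are illustrative only.
picture = OperationalPicture(
    known_patterns={"filler_valve_stuck": {"plc.pressure_bar", "scada.fill_rate"}}
)
picture.update("plc", {"pressure_bar": 7.2})
picture.update("scada", {"fill_rate": 40})

expected = {"plc.pressure_bar": (2.0, 6.0), "scada.fill_rate": (80, 120)}
deviating = picture.deviations(expected)
matches = picture.match_patterns(deviating)
```

In a real plant the fixed ranges would likely give way to learned baselines and the pattern table to historical incident data, but the flow stays the same: update, detect deviations, correlate.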

The core decision-making process is structured as a multi-agent flow. Each agent has a defined responsibility, such as interpreting the event, selecting relevant knowledge sources, performing rule checks, or validating proposed actions. Behind each agent is an SLM (Small Language Model) or similar neural-network component that analyses the specific input for that stage and produces a controlled, step-wise output. Because every agent handles only a narrow task, the overall system behaves more predictably, is easier to audit, and is less sensitive to unexpected input. Outputs are verified at each step, and no decision is passed forward without satisfying policy and consistency checks.
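The step-wise flow described above can be sketched in a few lines of Python. This is a simplified illustration, not the actual system: the `Stage` structure, the stage names, and the pressure threshold are assumptions, and each `run` function is a plain-Python stand-in for what would be an SLM call.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Stage:
    name: str
    run: Callable[[dict], dict]    # stand-in for the agent's model call
    check: Callable[[dict], bool]  # policy/consistency check on the agent's output


def run_pipeline(stages, event):
    """Run stages in order; stop at the first output that fails its check."""
    state = dict(event)
    trace = []
    for stage in stages:
        output = stage.run(state)
        if not stage.check(output):
            trace.append((stage.name, "rejected"))
            return None, trace  # nothing is passed forward
        trace.append((stage.name, "ok"))
        state.update(output)
    return state, trace


def interpret(state):
    # Hypothetical rule; a real agent would classify the event with a model.
    kind = "overpressure" if state["pressure_bar"] > 6.0 else "normal"
    return {"event_type": kind}


def select_knowledge(state):
    # Hypothetical mapping from event type to knowledge sources.
    sources = {"overpressure": ["filler_manual", "incident_log"]}
    return {"sources": sources.get(state["event_type"], [])}


STAGES = [
    Stage("interpret", interpret,
          lambda out: out["event_type"] in {"overpressure", "normal"}),
    Stage("select_knowledge", select_knowledge,
          lambda out: len(out["sources"]) > 0),
]

result, trace = run_pipeline(STAGES, {"pressure_bar": 7.2})
```

Because each stage sees only the accumulated state and must pass its own check, the `trace` doubles as a compact record of where a flow stopped and why.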

When an action is required, the system uses only authorised interfaces to interact with the environment. It can read machine states, query databases, or execute predefined commands such as controlled resets or diagnostic routines. Command execution is subject to an additional verification layer: an action-validation agent checks whether parameters fall within approved limits, whether the line’s current state matches expected conditions, and whether human confirmation is mandatory. If any inconsistency appears, the sequence stops and is escalated to an operator with a clear explanation of the reasoning and the point of failure.
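As a rough sketch of what such an action-validation step might look like, the Python below checks the three conditions named above: parameter limits, expected line state, and mandatory human confirmation. The command names, the `POLICY` table, and the limits are hypothetical examples, not real plant settings.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Command:
    name: str
    params: dict


# Hypothetical policy table: only commands listed here may ever be executed.
POLICY = {
    "reset_filler": {
        "limits": {"delay_s": (0, 30)},
        "required_state": "stopped",
        "needs_human": True,
    },
    "run_diagnostics": {
        "limits": {},
        "required_state": None,
        "needs_human": False,
    },
}


def validate(cmd, line_state, human_approved=False):
    """Return (allowed, reason); the reason is what an operator would see."""
    policy = POLICY.get(cmd.name)
    if policy is None:
        return False, f"'{cmd.name}' is not a predefined command"
    for key, (low, high) in policy["limits"].items():
        value = cmd.params.get(key)
        if value is None or not low <= value <= high:
            return False, f"parameter '{key}'={value} outside approved range [{low}, {high}]"
    required = policy["required_state"]
    if required is not None and line_state != required:
        return False, f"line is '{line_state}', expected '{required}'"
    if policy["needs_human"] and not human_approved:
        return False, "human confirmation required"
    return True, "ok"


allowed, reason = validate(Command("reset_filler", {"delay_s": 5}), "running", True)
```

Here the reset is refused because the line is still running, and `reason` carries the explanation that would accompany the escalation to an operator.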

Security, oversight, and auditability are built into every stage of the architecture. Access to APIs is strictly scoped, write actions are separated from read capabilities, and sensitive operations can require explicit human approval. Each agent’s output, the reasoning behind decisions, and all executed commands are recorded in an audit trail that can be reviewed by engineering, operations, or compliance teams. These guardrails ensure that even though the system uses language processing and neural-network reasoning to identify likely root causes and suggest actions, every step remains controlled, transparent, and aligned with established industrial safety expectations.
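One way to picture scoped access combined with an audit trail is the small Python sketch below. It is illustrative only: `ScopedClient`, the scope names, and the target names are assumptions, and no real PLC or SCADA API is called. Every attempt is logged, including the ones that are refused.

```python
import json
from datetime import datetime, timezone


class ScopedClient:
    """Hypothetical API wrapper that enforces scopes and records every call."""

    def __init__(self, agent, scopes, audit_log):
        self.agent = agent
        self.scopes = frozenset(scopes)
        self.audit_log = audit_log

    def _record(self, action, target, allowed, reason):
        # Append one JSON line per attempt, whether or not it was allowed.
        self.audit_log.append(json.dumps({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent": self.agent,
            "action": action,
            "target": target,
            "allowed": allowed,
            "reason": reason,
        }))

    def read(self, target, reason):
        allowed = "read" in self.scopes
        self._record("read", target, allowed, reason)
        if not allowed:
            raise PermissionError(f"{self.agent} may not read {target}")
        return f"<value of {target}>"  # placeholder for a real read

    def write(self, target, value, reason, approved_by=None):
        # Writes need the write scope and, in this sketch, a named human approver.
        allowed = "write" in self.scopes and approved_by is not None
        self._record("write", target, allowed, reason)
        if not allowed:
            raise PermissionError(f"{self.agent} may not write {target}")
        return True  # placeholder for a real write


audit_log = []
diagnostics = ScopedClient("diagnostics-agent", {"read"}, audit_log)
diagnostics.read("filler.pressure_bar", reason="correlate with alarm")
try:
    diagnostics.write("filler.reset", 1, reason="attempted reset")
except PermissionError:
    pass  # refused, but the attempt is still in the audit log
```

Keeping read and write behind separate scopes means a diagnostic agent can never issue a command by accident, and the log gives engineering or compliance teams a line-by-line record of what each agent tried to do and why.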

Want to find out more about the knowledge the agents are using? Let us introduce our SenseDrive.ai tool.

Interested, but not sure where to start? Get in touch.